W3cubDocs

/NumPy 1.13numpy.histogram

-

numpy.histogram(a, bins=10, range=None, normed=False, weights=None, density=None)[source] -

Compute the histogram of a set of data.

Parameters: a : array_like

Input data. The histogram is computed over the flattened array.

bins : int or sequence of scalars or str, optional

If

binsis an int, it defines the number of equal-width bins in the given range (10, by default). Ifbinsis a sequence, it defines the bin edges, including the rightmost edge, allowing for non-uniform bin widths.New in version 1.11.0.

If

binsis a string from the list below,histogramwill use the method chosen to calculate the optimal bin width and consequently the number of bins (seeNotesfor more detail on the estimators) from the data that falls within the requested range. While the bin width will be optimal for the actual data in the range, the number of bins will be computed to fill the entire range, including the empty portions. For visualisation, using the ‘auto’ option is suggested. Weighted data is not supported for automated bin size selection.- ‘auto’

-

Maximum of the ‘sturges’ and ‘fd’ estimators. Provides good all around performance.

- ‘fd’ (Freedman Diaconis Estimator)

-

Robust (resilient to outliers) estimator that takes into account data variability and data size.

- ‘doane’

-

An improved version of Sturges’ estimator that works better with non-normal datasets.

- ‘scott’

-

Less robust estimator that that takes into account data variability and data size.

- ‘rice’

-

Estimator does not take variability into account, only data size. Commonly overestimates number of bins required.

- ‘sturges’

-

R’s default method, only accounts for data size. Only optimal for gaussian data and underestimates number of bins for large non-gaussian datasets.

- ‘sqrt’

-

Square root (of data size) estimator, used by Excel and other programs for its speed and simplicity.

range : (float, float), optional

The lower and upper range of the bins. If not provided, range is simply

(a.min(), a.max()). Values outside the range are ignored. The first element of the range must be less than or equal to the second.rangeaffects the automatic bin computation as well. While bin width is computed to be optimal based on the actual data withinrange, the bin count will fill the entire range including portions containing no data.normed : bool, optional

This keyword is deprecated in NumPy 1.6.0 due to confusing/buggy behavior. It will be removed in NumPy 2.0.0. Use the

densitykeyword instead. IfFalse, the result will contain the number of samples in each bin. IfTrue, the result is the value of the probability density function at the bin, normalized such that the integral over the range is 1. Note that this latter behavior is known to be buggy with unequal bin widths; usedensityinstead.weights : array_like, optional

An array of weights, of the same shape as

a. Each value inaonly contributes its associated weight towards the bin count (instead of 1). Ifdensityis True, the weights are normalized, so that the integral of the density over the range remains 1.density : bool, optional

If

False, the result will contain the number of samples in each bin. IfTrue, the result is the value of the probability density function at the bin, normalized such that the integral over the range is 1. Note that the sum of the histogram values will not be equal to 1 unless bins of unity width are chosen; it is not a probability mass function.Overrides the

normedkeyword if given.Returns: hist : array

The values of the histogram. See

densityandweightsfor a description of the possible semantics.bin_edges : array of dtype float

Return the bin edges

(length(hist)+1).See also

Notes

All but the last (righthand-most) bin is half-open. In other words, if

binsis:[1, 2, 3, 4]

then the first bin is

[1, 2)(including 1, but excluding 2) and the second[2, 3). The last bin, however, is[3, 4], which includes 4.New in version 1.11.0.

The methods to estimate the optimal number of bins are well founded in literature, and are inspired by the choices R provides for histogram visualisation. Note that having the number of bins proportional to

is asymptotically optimal, which is why it appears in most estimators. These are simply plug-in methods that give good starting points for number of bins. In the equations below,

is asymptotically optimal, which is why it appears in most estimators. These are simply plug-in methods that give good starting points for number of bins. In the equations below,  is the binwidth and

is the binwidth and  is the number of bins. All estimators that compute bin counts are recast to bin width using the

is the number of bins. All estimators that compute bin counts are recast to bin width using the ptpof the data. The final bin count is obtained from ``np.round(np.ceil(range / h))`.- ‘Auto’ (maximum of the ‘Sturges’ and ‘FD’ estimators)

- A compromise to get a good value. For small datasets the Sturges value will usually be chosen, while larger datasets will usually default to FD. Avoids the overly conservative behaviour of FD and Sturges for small and large datasets respectively. Switchover point is usually

.

. - ‘FD’ (Freedman Diaconis Estimator)

-

The binwidth is proportional to the interquartile range (IQR) and inversely proportional to cube root of a.size. Can be too conservative for small datasets, but is quite good for large datasets. The IQR is very robust to outliers.

- ‘Scott’

-

![h = \sigma \sqrt[3]{\frac{24 * \sqrt{\pi}}{n}}](https://docs.scipy.org/doc/numpy-1.13.0/_images/math/f7dc925b2da3af8c6d7b9730ecbd5e387753a866.png)

The binwidth is proportional to the standard deviation of the data and inversely proportional to cube root of

x.size. Can be too conservative for small datasets, but is quite good for large datasets. The standard deviation is not very robust to outliers. Values are very similar to the Freedman-Diaconis estimator in the absence of outliers. - ‘Rice’

-

The number of bins is only proportional to cube root of

a.size. It tends to overestimate the number of bins and it does not take into account data variability. - ‘Sturges’

-

The number of bins is the base 2 log of

a.size. This estimator assumes normality of data and is too conservative for larger, non-normal datasets. This is the default method in R’shistmethod. - ‘Doane’

-

![n_h = 1 + \log_{2}(n) +

\log_{2}(1 + \frac{|g_1|}{\sigma_{g_1}})

g_1 = mean[(\frac{x - \mu}{\sigma})^3]

\sigma_{g_1} = \sqrt{\frac{6(n - 2)}{(n + 1)(n + 3)}}](https://docs.scipy.org/doc/numpy-1.13.0/_images/math/b7318cd405779a78210d7be4ec1cc6d8c3c8ad32.png)

An improved version of Sturges’ formula that produces better estimates for non-normal datasets. This estimator attempts to account for the skew of the data.

- ‘Sqrt’

-

The simplest and fastest estimator. Only takes into account the data size.

Examples

>>> np.histogram([1, 2, 1], bins=[0, 1, 2, 3]) (array([0, 2, 1]), array([0, 1, 2, 3])) >>> np.histogram(np.arange(4), bins=np.arange(5), density=True) (array([ 0.25, 0.25, 0.25, 0.25]), array([0, 1, 2, 3, 4])) >>> np.histogram([[1, 2, 1], [1, 0, 1]], bins=[0,1,2,3]) (array([1, 4, 1]), array([0, 1, 2, 3]))

>>> a = np.arange(5) >>> hist, bin_edges = np.histogram(a, density=True) >>> hist array([ 0.5, 0. , 0.5, 0. , 0. , 0.5, 0. , 0.5, 0. , 0.5]) >>> hist.sum() 2.4999999999999996 >>> np.sum(hist * np.diff(bin_edges)) 1.0

New in version 1.11.0.

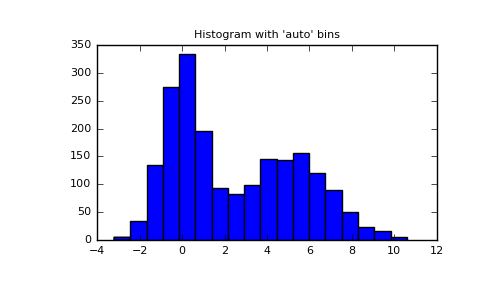

Automated Bin Selection Methods example, using 2 peak random data with 2000 points:

>>> import matplotlib.pyplot as plt >>> rng = np.random.RandomState(10) # deterministic random data >>> a = np.hstack((rng.normal(size=1000), ... rng.normal(loc=5, scale=2, size=1000))) >>> plt.hist(a, bins='auto') # arguments are passed to np.histogram >>> plt.title("Histogram with 'auto' bins") >>> plt.show()(Source code, png, pdf)

© 2008–2017 NumPy Developers

Licensed under the NumPy License.

https://docs.scipy.org/doc/numpy-1.13.0/reference/generated/numpy.histogram.html